Learn Moodle: Step by Step or All at Once?

A project for the Edinburgh Napier MBOE on:

Factors affecting choice of access to content in a “Learn Moodle” MOOC and their effect on MOOC completion rates

Methodology

General overview of data and reporting available

The data and reporting available depend on the user's role and permissions in a course and on the Moodle site. They also depend on whether the site relies only on standard reports or also has access to contributed (non-standard) report plugins or to reports produced via direct database access.

Administrator reports:

From within the admin interface of a Moodle site, an administrator can access site and course logs for all users, showing when they logged in and out and what actions they took. Course reports are the same as those available to a teacher. (See the section below: 'Teacher (Facilitator) reports').

Additionally, if the feature is enabled, an administrator can access and manage Analytics models, a Moodle feature which generates predictions based on indicators, as outlined in the Moodle documentation on Analytics (Docs.moodle.org, 2019). Of these, the most useful to MOOC facilitators would be the model "Students at risk of dropping out". Another model, "Upcoming activities due", could also provide notifications to prompt learners to stay on track.

If the Moodle site host permits the addition of contributed plugins, a wide range of reporting and analytics tools, both free and paid, can assist in identifying trends in the course. Moodle itself is partnered with Intelliboard (Intelliboard.net, 2019), which provides customisable dashboards for admins, teachers and learners, with reports not available as standard in Moodle, such as a learner's progress in relation to that of others and to the course average. There is also a popular free plugin, Configurable reports, which allows admins and regular course teachers to create custom reports without SQL knowledge.

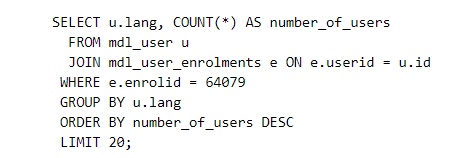

If the Moodle administrator or the Moodle site host has access to the server, it is possible to devise SQL queries and create reports containing information not available in the standard course reports. This offers far more possibilities for reporting: rather than being constrained by the available reports (such as the Completion reports described below), the teacher or admin decides on the desired output of the report and the SQL is then composed to produce those figures. Thus, for the Learn Moodle Basics MOOC, it would be possible to find out the selected language of all course participants by using an SQL query similar to the one below. This example queries the language selected by participants throughout the whole Learn Moodle site:

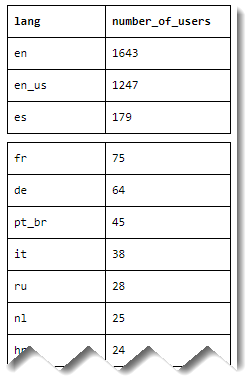
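Since the query itself is shown only as a screenshot, the following is a minimal sketch of what such a query might look like, not the query actually used on the Learn Moodle site. It assumes Moodle's default `mdl_` table prefix and the `lang` and `deleted` columns of the user table. The in-memory SQLite database and its sample rows are hypothetical stand-ins for a real site database.

```python
import sqlite3

# Hypothetical stand-in for the Moodle database: an in-memory SQLite
# table with only the mdl_user columns needed for this sketch.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE mdl_user (id INTEGER PRIMARY KEY, lang TEXT, deleted INTEGER)"
)
# Invented sample rows: (selected language, deleted flag).
conn.executemany(
    "INSERT INTO mdl_user (lang, deleted) VALUES (?, ?)",
    [("en", 0), ("es", 0), ("en", 0), ("fr", 0), ("es", 0), ("en", 0), ("de", 1)],
)

# Count non-deleted users per selected language, most common first.
LANGUAGE_QUERY = """
    SELECT lang, COUNT(*) AS users
    FROM mdl_user
    WHERE deleted = 0
    GROUP BY lang
    ORDER BY users DESC, lang ASC
"""

language_counts = conn.execute(LANGUAGE_QUERY).fetchall()
for lang, users in language_counts:
    print(f"{lang}: {users}")
```

On a real site the same `SELECT` statement would be run against the site database by someone with server access, or through a report plugin that accepts SQL.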

The raw output of such a database query can then look like this example (which only gives some of the data)

Teacher (Facilitator) reports

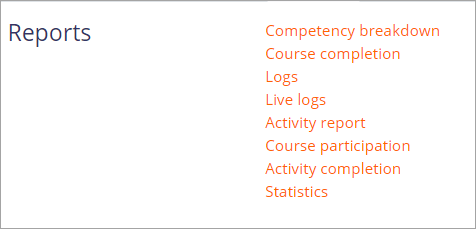

Anyone with the editing teacher role in a Moodle course can access logs and reports from the course administration area. Reports available depend on course settings and site settings. For example, the course completion report is only available if course completion has been set in a course and the Statistics and Competency breakdown reports are only available if Statistics and Competencies are enabled on the site.

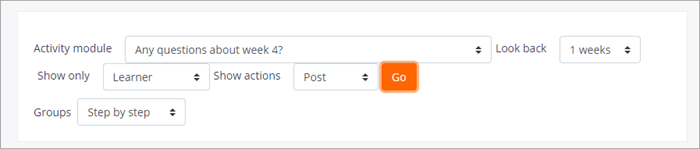

These reports provide useful information about participant engagement. For example, it is possible for a regular course facilitator to see how often (if at all) learners have contributed to a particular forum discussion, by selecting the relevant fields in the course participation report:

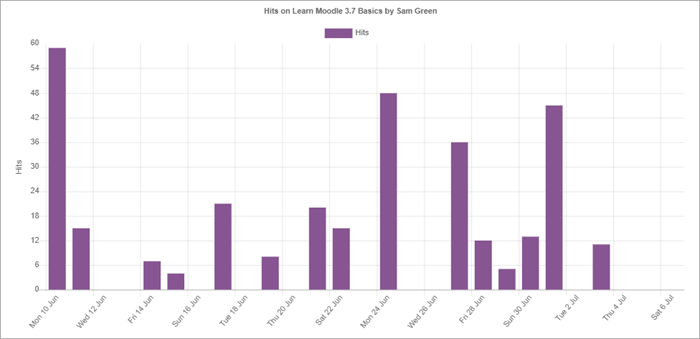

Logs and statistics about individual learners can be viewed by the facilitator from the profile of a learner. (This example is of a test learner in the 3.7 MOOC run in June 2019).

Methodology for this project:

This project uses a mixed-methods methodology, combining quantitative and qualitative approaches.

A quantitative approach was taken because of the ease with which reports can be obtained from Moodle courses and because I, a facilitator on the MOOCs in question, had access to these reports. I was not able to run SQL queries, and learning analytics were not enabled on the three MOOCs in this study.

A qualitative approach was also taken in order to learn about the motivations behind participants' choice of access to course materials. As a facilitator with editing rights to the most recent MOOC, I was able to add specific questions, some requiring free text answers, to a participant survey. The quantitative and qualitative data were then reflected upon by the co-facilitators in a paired discussion.

The data for this study comes from three sources:

- the completion reports from each of the three MOOCs (Jan 2018, June 2018 and Jan 2019). The numbers involved are as follows:

| MOOC | Total signed up | Total completed |
|---|---|---|
| Jan 2018 | 7437 | 1046 |
| June 2018 | 4957 | 793 |
| Jan 2019 | 3592 | 623 |

- a participant survey from the Jan 2019 MOOC including both multiple choice answers and free text answers. 1127 participants completed the survey.

- a paired discussion with the co-facilitator in which she gives her impressions of the collated data.
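The sign-up and completion totals in the table above can be turned into completion rates with simple arithmetic. The sketch below uses only the figures from the table; the variable and function names are illustrative, and "completed" is taken exactly as reported in the "Total completed" column.

```python
# Sign-up and completion totals copied from the table above.
moocs = {
    "Jan 2018": (7437, 1046),
    "June 2018": (4957, 793),
    "Jan 2019": (3592, 623),
}


def completion_rate(signed_up, completed):
    """Return completions as a percentage of sign-ups."""
    return 100 * completed / signed_up


rates = {name: completion_rate(*totals) for name, totals in moocs.items()}
for name, rate in rates.items():
    print(f"{name}: {rate:.1f}%")
```

This gives roughly 14.1%, 16.0% and 17.3% for the three runs respectively.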

Advantages and limitations of the data:

Moodle provides easy-to-download reports for teachers, such as the completion report and the participant survey. Such reports are available as long as the courses are still accessible. The completion reports for previous MOOCs have been used to provide a short forum post summarising the results after each run. However, the reports are limited in how far the data can be refined; ideally, SQL reports run by an administrator with server access would give more detailed information. Google Analytics (which has been used in earlier MOOCs) could give insights into the engagement and 'on trackness' of the participants on both pathways. Olivé et al. (2019) designed and tested a learning analytics framework to identify indicators of success and failure in the Learn Moodle MOOC, but this feature was not available at the time of this project. For the sake of organisational simplicity, it was therefore decided to use only the standard Moodle reports.

Completion reports

Each of the MOOCs has a report option which shows, via ticks, which activities each participant has and has not completed, with a final column showing who has completed the course by obtaining completion ticks in all required activities.

These reports were downloaded for the Step by step group and for the All at once group in Excel-compatible format (CSV) and added to six separate worksheets (two groups for each of the three MOOCs) of an Excel spreadsheet, which was kept in a password-protected folder.

By adding a "completed" filter to each worksheet, it was possible to filter each column in each worksheet in order to count how many participants in each group for each MOOC had:

- completed the MOOC by completing all activities

- partially completed the MOOC by completing everything except the Workshop, thereby obtaining a Certificate of achievement

- not completed the MOOC and therefore received no certificate.
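The three-way split described above was carried out with spreadsheet filters. As an illustration only, the same logic can be sketched in code. The column names, the "Completed"/"Not completed" cell values and the sample rows below are hypothetical and do not reproduce the layout of the actual Moodle export.

```python
import csv
import io
from collections import Counter

# Hypothetical extract of a completion report: one row per participant,
# one column per required activity. Names and values are invented.
SAMPLE_CSV = """participant,Quiz,Forum,Workshop
A,Completed,Completed,Completed
B,Completed,Completed,Not completed
C,Completed,Not completed,Not completed
D,Not completed,Completed,Completed
"""


def classify(row, activities, workshop="Workshop"):
    """Place one participant in one of the three outcome groups."""
    done = {a for a in activities if row[a] == "Completed"}
    if done == set(activities):
        return "completed"
    # Everything except the Workshop counts as partial completion.
    if done == set(activities) - {workshop}:
        return "partially completed"
    return "not completed"


reader = csv.DictReader(io.StringIO(SAMPLE_CSV))
activities = [name for name in reader.fieldnames if name != "participant"]
outcomes = Counter(classify(row, activities) for row in reader)
print(outcomes)
```

Running the same classification once per worksheet would give the counts per group and per MOOC.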

Participant survey

A Moodle Feedback activity was used for the survey and was set to anonymous mode. The MOOC contains a regular 'Feedback after the first week' survey for participants as a way of helping the facilitators gauge the mood after the first week, so questions specific to the research were added to the January 2019 MOOC. These were all mandatory.

- Is this your first time doing the MOOC? (yes/no)

- Which path did you choose? (Step by step/All at once)

- Why did you choose this path? (free text)

- How confident are you that you will complete the MOOC? (Very confident/Quite confident/Not at all confident)

- How well do you understand English? (Very well/Quite well/Not very well)

As with the completion reports, Moodle offered the option to download the results in Excel-compatible format (CSV). Once the results were downloaded, filters were applied to divide the participants into the two groups and analyse the data.
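The filtering step was done in a spreadsheet, but an equivalent can be sketched in a few lines of code. The column headers and the rows below are invented for illustration and are not taken from the real survey export.

```python
import csv
import io
from collections import Counter

# Hypothetical survey export; headers and rows are invented.
SURVEY_CSV = """first_time,path,confidence
yes,Step by step,Very confident
no,All at once,Very confident
yes,Step by step,Quite confident
yes,All at once,Not at all confident
no,Step by step,Very confident
"""

rows = list(csv.DictReader(io.StringIO(SURVEY_CSV)))

# Equivalent of applying a spreadsheet filter on the "path" column.
step_by_step = [r for r in rows if r["path"] == "Step by step"]
all_at_once = [r for r in rows if r["path"] == "All at once"]

# Tally the confidence answers within each group.
confidence_by_path = {
    "Step by step": Counter(r["confidence"] for r in step_by_step),
    "All at once": Counter(r["confidence"] for r in all_at_once),
}
print(confidence_by_path)
```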

Limitations of the Participant survey:

While everyone who completed or partially completed the MOOC also completed the Participant survey, not everyone who completed the survey then went on to complete or partially complete the MOOC. Therefore, caution should be used when assigning the findings to the group as a whole, since some responses might have been given by those who did not progress further in the MOOC.

Analysing the free text responses

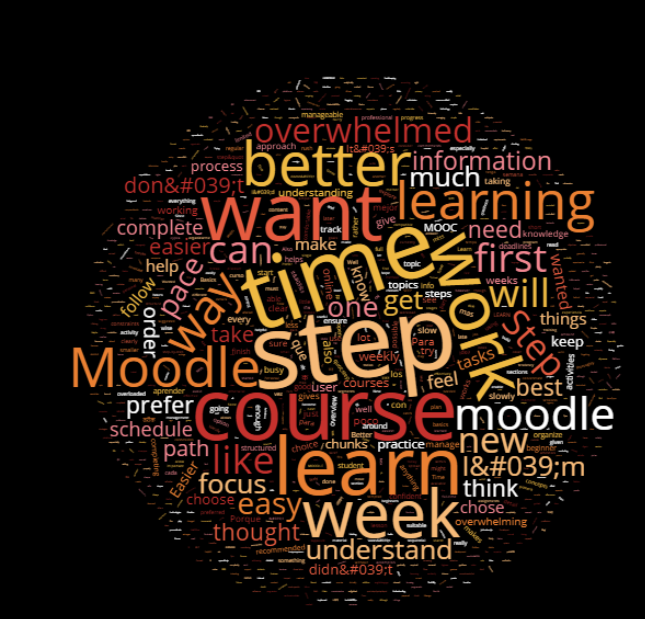

The free text responses to the question "Why did you choose this path?" were initially run through an online text analyser, Online Utility.org. However this was not particularly satisfactory since although the tool could count up frequencies of words and phrases, it could not combine those which had similar meanings for example "I thought it would be easier" and "I chose the simple option"

A wordcloud generated by wordclouds.com using the free text responses of the Step by step group.
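The limitation of frequency counting described above can be seen in a small sketch. This is a plain word count written for illustration, not the algorithm used by the online tool, and it is applied to the two example phrasings quoted in the text.

```python
import re
from collections import Counter

# The two example phrasings from the text, which express the same motivation.
responses = [
    "I thought it would be easier",
    "I chose the simple option",
]

# Naive frequency count: lower-case the text and count each word form.
words = Counter(
    word
    for text in responses
    for word in re.findall(r"[a-z']+", text.lower())
)

# "easier" and "simple" are counted as unrelated words, so the shared
# meaning of the two responses is not visible in the frequencies.
print(words["easier"], words["simple"])
```

Each of the two words is counted once and separately, so a reader still has to decide that both responses belong to the same theme.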

Because of this drawback, it was instead decided to read through every text comment and place it in a particular category. The categories were chosen based on the types of responses given, and were added to during the analysis. Filters again allowed for filtering by group and by category. The categories were:

- Easier

- Experienced

- Newbie

- Flexibility

- Full/Detailed

- Time issues

- More time

- Manageable/chunking

- Other

- Overwhelmed

- Own pace

- Want to!

- Non-English*

*Non-native English speakers

Participants were not asked specifically whether they were native English speakers, as the options in question 5 were considered adequate. However, for the free text responses in question 3, participants were informed that they could answer in their own language. These responses were filtered by "Non-English" and counted; their content was then read and added to the other categories in the same way as the English language responses. The short text answers were very straightforward and, where I did not understand the language myself, I used Google Translate, which was justifiable in these circumstances.
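Once each response has been assigned a category by hand, counting by group and by category is mechanical. The sketch below uses category names from the list above, but the (group, category) pairs are invented examples and say nothing about the actual results.

```python
from collections import Counter

# Hypothetical hand-coded responses as (group, category) pairs.
# The pairings are invented and do not reflect the survey findings.
coded = [
    ("Step by step", "Easier"),
    ("Step by step", "Newbie"),
    ("Step by step", "Easier"),
    ("All at once", "Experienced"),
    ("All at once", "Flexibility"),
    ("All at once", "Experienced"),
    ("Step by step", "Own pace"),
]


def tally(coded_responses, group):
    """Count how often each category was assigned within one group."""
    return Counter(cat for g, cat in coded_responses if g == group)


step_counts = tally(coded, "Step by step")
all_counts = tally(coded, "All at once")
print(step_counts.most_common())
print(all_counts.most_common())
```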

Paired discussion with the co-facilitator

When considering joint interviews, Kelly, Nesbit and Oliver (2012) quote Seymour et al. (1995), who state that these can be "an effective means to uncover the different kinds of knowledge held by each participant and produce a more comprehensive picture". Because the co-facilitator also has access to the MOOC reports, it was decided that, rather than conducting a formal interview with pre-prepared questions, the meeting would be a paired discussion in which she would give her own impressions of the completion results and participant survey, and together we would reflect upon their significance and potential usefulness for future MOOC improvements.

The discussion took place online using Zoom software and was recorded and transcribed.